Tag Archives: Apollo

Digitizing Apollo 17 Part 14 – A Fantastic Reception

It has been a wild ride since March, when I made public the first alpha release of apollo17.org. Now at v0.6, it’s still an alpha, but it has been improved and stabilized over the spring and early summer.

The day the site went live, March 25/2015, Gizmodo picked up the site and I got a massive surge of traffic. Technically everything held together, and I was rewarded with many enthusiastic messages from people experiencing the site for the first time. Super rewarding.

Digitizing Apollo 17 Part 13 – Apollo17.org – Alpha Release v0.1

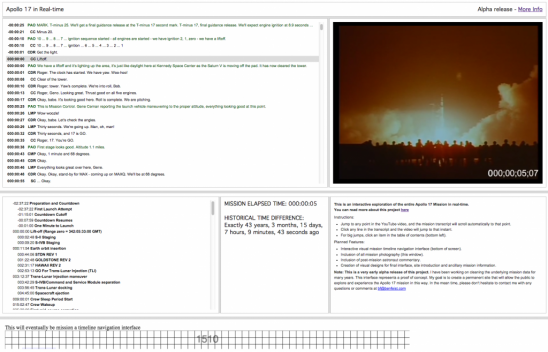

Today I’m happy to announce the public alpha release of Apollo17.org, an interactive explorer that allows you to experience the entire Apollo 17 mission (305 hours long) in real-time. It represents the culmination of the years of mission data cleaning I have blogged about here. My goal is to create a full-featured site that will allow the public to explore and experience the Apollo 17 mission in this way.

Version 0.1, which is just a proof of concept, is now active. Currently you need a fast computer and a good internet connection to run the experience. It is best viewed in Google Chrome (it hasn’t been tested with any other browsers).


Digitizing Apollo 17 Part 12 – YouTube Channel of Complete Mission

It has been an eventful beginning to 2015. In my previous post I went over the new mission audio released by NASA. This audio has been merged into the base project and the resulting transcript corrections have been made.

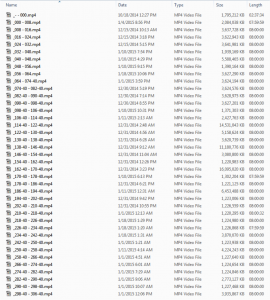

Over the years I have been creating Adobe Premiere videos that contain all mission audio and video. These have taken years to make and I intended to only use them for the purposes of correcting the transcript. However, I recently decided to render them out and upload them to YouTube. The resulting YouTube Channel contains 39 videos that are each 8 hours long. It’s pretty incredible that YouTube can house videos of such long duration. I wonder what people’s reactions will be when they stumble across an 8 hour Apollo Mission video without any context.


Much of the video is black with a simple timecode showing Mission Elapsed Time. Without reference to the overall mission or transcripts, the videos leave you a little lost.

Over the coming weeks I plan to create a proof of concept that links the corrected transcript to YouTube video playback.
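As a rough illustration of what that linking could involve, here is a minimal Python sketch that maps a Mission Elapsed Time stamp to a video number and a playback offset. It is not the project's actual code. It assumes the videos are contiguous 8-hour segments, and the function names and the `first_video_start_met_seconds` parameter (the MET at which the first video begins, negative if it starts before launch) are hypothetical.

```python
# Hypothetical sketch: locate a MET timestamp inside a series of 8-hour videos.
SEGMENT_SECONDS = 8 * 60 * 60  # each video is 8 hours long


def met_to_seconds(met: str) -> int:
    """Convert an 'HHH:MM:SS' Mission Elapsed Time string to seconds."""
    hours, minutes, seconds = (int(part) for part in met.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def locate_in_videos(met: str, first_video_start_met_seconds: int = 0):
    """Return (1-based video number, offset in seconds within that video).

    first_video_start_met_seconds is an assumption: the MET, in seconds,
    at which the first video begins (negative if it starts before launch).
    """
    elapsed = met_to_seconds(met) - first_video_start_met_seconds
    if elapsed < 0:
        raise ValueError("timestamp falls before the start of the first video")
    index, offset = divmod(elapsed, SEGMENT_SECONDS)
    return index + 1, offset


if __name__ == "__main__":
    video, offset = locate_in_videos("112:46:30")
    print(f"video {video}, offset {offset} seconds")
```

The offset in seconds could then be handed to whatever player is used to seek within the chosen video. If the first video includes pre-launch commentary, the start parameter would need to be set accordingly.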

Digitizing Apollo 17 Part 11 – More mission audio released by NASA

Due to the hard work of Greg Wiseman of the Houston Audio Control Room at the Johnson Space Center, more Apollo 17 mission audio has been digitized and released on the Internet Archive. I’m now in the process of laying in the missing clips into the mission reconstruction project and have resumed correcting the Air-to-Ground transcripts.


Digitizing Apollo 17 Part 10 – Manual Transcript Corrections Completed!

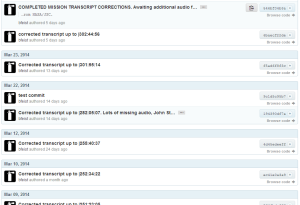

I can’t tell if I’m the tortoise or the hare, but March 31st was a big day. My GitHub logs show that I started the manual step of the transcript correction process December 1/2012, and completed it March 31/2014 (with big summer breaks in there). There are still many hours of missing audio material, but I’ve been in touch with the Houston Audio Control Room at the Johnson Space Center, and they’ve been very responsive and helpful. They assured me that the missing material was on their list of to-dos, and they’re aiming to start getting the missing material to me within the next few weeks. It feels good to get to the next step. I don’t need the missing material to begin post-processing of the corrected material. As new audio becomes available, it will mean more manual correction, then the re-running of the automated post-processing actions I’m about to write.


Digitizing Apollo 17 Part 9 – The Trip Home

It has been far too long since my last update on this enormous project. I’ve been toiling away for the past year and am now only a few months away from the end of the current project phase (reconstructing timecode and correcting the transcript).

Last year I made quite a bit of headway in the colder months. The summer was a 3 or 4 month break (it’s nice and warm outside and I’m involved in other things).

Digitizing Apollo 17 Part 8 – Changing The Clocks

It has been a month or so since my last report on my progress. I have been making consistent headway on correcting the transcript while reassembling the timeline in Adobe Premiere as I go. As of today I’ve corrected the transcript to 112:46:30, which is just after they landed on the Moon and got the GO to stay there.

If you take a look at my project on GitHub you can see my progress in detail.

Digitizing Apollo 17 Part 7 – Listening in Real Time

I’ve spent many hours now listening in real time to the audio timeline that I have partially reconstructed in Premiere (which I described in my previous post). I’m 21 hours, 48 minutes into the mission (plus the two hours of commentary leading up to the launch). There have been some segments of missing audio and there has been one 6 hour sleep period that contained only occasional Public Affairs Office (PAO) announcements.

Digitizing Apollo 17 Part 6 – Timeline Reconstruction

While tabling and OCRing all of the transcript pages, running Python cleanup procedures and manually running search and replace rules, I had a lot of time to think about this project and where it might lead. The underlying thought that I kept coming back to is that I’m re-establishing a cohesive chain of events that occurred over a 13-day period, 40 years ago. Starting from this idea of a chain I expanded my investigation to include other media elements in addition to the transcript. I realized that I would have to reconcile all of the audio recorded, film shot, photos taken, and television broadcast during that 13-day period in order to reconstruct the chain of events.


Digitizing Apollo 17 Part 5 – Python Processing

Now that the TEC and PAO transcript data is in pipe-delimited CSV format, I can start to use batch cleansing techniques to further clean the raw OCR output data (CSV) into 2nd phase cleaned CSV. These processes are all automated tasks with no manual intervention. Once again, I did this purposely to keep the string of steps automated all the way from the original OCR steps to the cleaned output CSV in case I ever need to go back and change one of my earlier OCR settings etc. Any manual changes to the data would be wiped out by re-exporting any of the earlier steps.
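To give a feel for this kind of automated pass, here is a minimal Python sketch of one batch cleaning step over pipe-delimited rows. It is an illustration only, not the project's actual scripts. The column layout (timestamp, speaker, text), the sample row, and the specific cleanup rules (collapsing whitespace and dropping empty rows) are all assumptions.

```python
import csv
import io
import re
from typing import List


def clean_rows(raw_text: str) -> List[List[str]]:
    """Parse pipe-delimited text and apply simple, repeatable cleanup rules."""
    reader = csv.reader(io.StringIO(raw_text), delimiter="|", quoting=csv.QUOTE_NONE)
    cleaned = []
    for row in reader:
        # Collapse runs of whitespace left behind by OCR and trim each field.
        fields = [re.sub(r"\s+", " ", field).strip() for field in row]
        # Skip blank lines and rows where every field is empty.
        if not any(fields):
            continue
        cleaned.append(fields)
    return cleaned


def to_pipe_delimited(rows: List[List[str]]) -> str:
    """Serialize cleaned rows back to pipe-delimited lines."""
    return "\n".join("|".join(fields) for fields in rows) + "\n"


if __name__ == "__main__":
    # Hypothetical raw OCR output: timestamp | speaker | text
    raw = "00 01 02 03 | CDR |  Okay ,   Houston .\n\n |  | \n"
    print(to_pipe_delimited(clean_rows(raw)), end="")
```

Because the function only reads its input and produces new output, it can be re-run from scratch whenever an earlier OCR step is re-exported, which is the property the fully automated chain depends on.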

Digitizing Apollo 17 Part 4 – Technical vs Public Affairs Office

There are two different air-to-ground transcripts associated with the Apollo 17 mission: the TEC, or Technical Air-to-Ground transcript, and the PAO, or Public Affairs Office transcript. Both were separately transcribed to typewritten pages in 1972 even though 80% of their content overlaps.


Digitizing Apollo 17 Part 3 – New OCR Techniques

As discussed in my previous post, the Apollo 17 PDFs contained an early attempt at recognizing the typewritten text using Adobe Acrobat’s built-in OCR functionality. Working from Adobe’s OCR output would result in a huge amount of manual labour, which kind of defeats the purpose of using OCR to begin with.

Digitizing Apollo 17 Part 2 – Transcript Restoration, A Beginning

If you’re interested in this project, have a quick flip through the PDF of the technical air-to-ground mission audio transcript to get an idea of what the source material is like. The raw PDF document was published courtesy Stephen Garber (NASA HQ) and Glen Swanson (JSC) (55MB PDF). These transcripts were originally typed in 1972 by NASA typists.

Digitizing Apollo 17 Part 1 – Discovering Apollo

In 1997 I was a developer at an advertising agency in the early days of the web world. I built interactive kiosks, CD-ROMs, etc. with a great team that I’m still friends with today. At that time internet content was thin; almost everything was about creating infrastructure. It was a technology-led world. Once a technical infrastructure was made, developers looked around for content to fill it with. Everyone needed “content providers”. The cart was leading the horse.